The Equivalence of Weighted Kappa and the Intraclass Correlation Coefficient as Measures of Reliability - Joseph L. Fleiss, Jacob Cohen, 1973

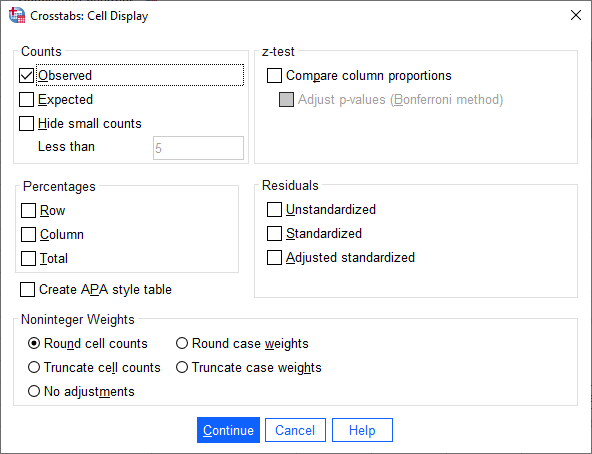

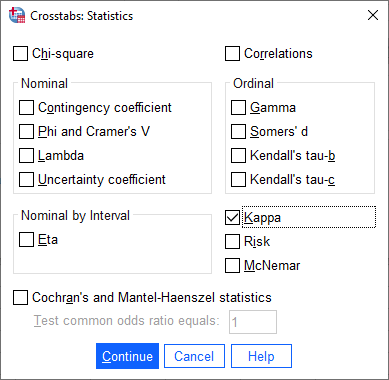

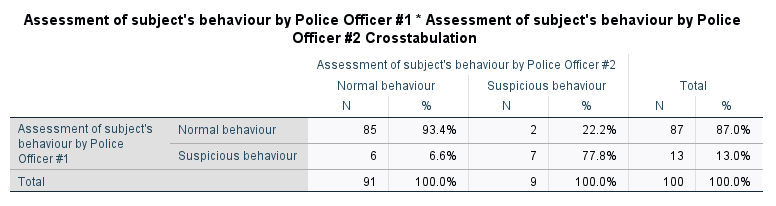

Cohen's kappa in SPSS Statistics - Procedure, output and interpretation of the output using a relevant example | Laerd Statistics

Kappa values and their interpretation for intra-rater and inter-rater... | Download Scientific Diagram

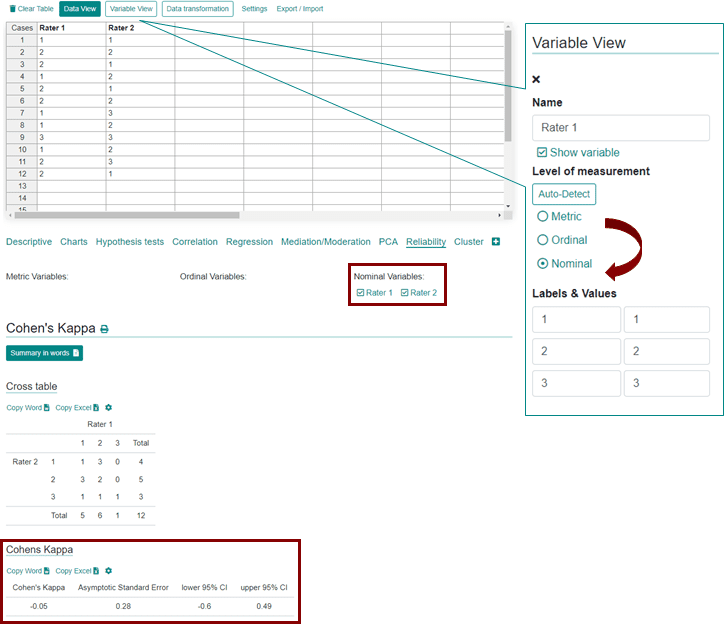

Measuring inter-rater reliability for nominal data - which coefficients and confidence intervals are appropriate?

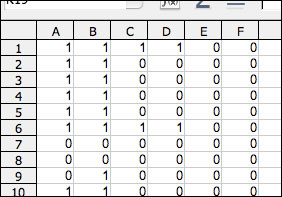

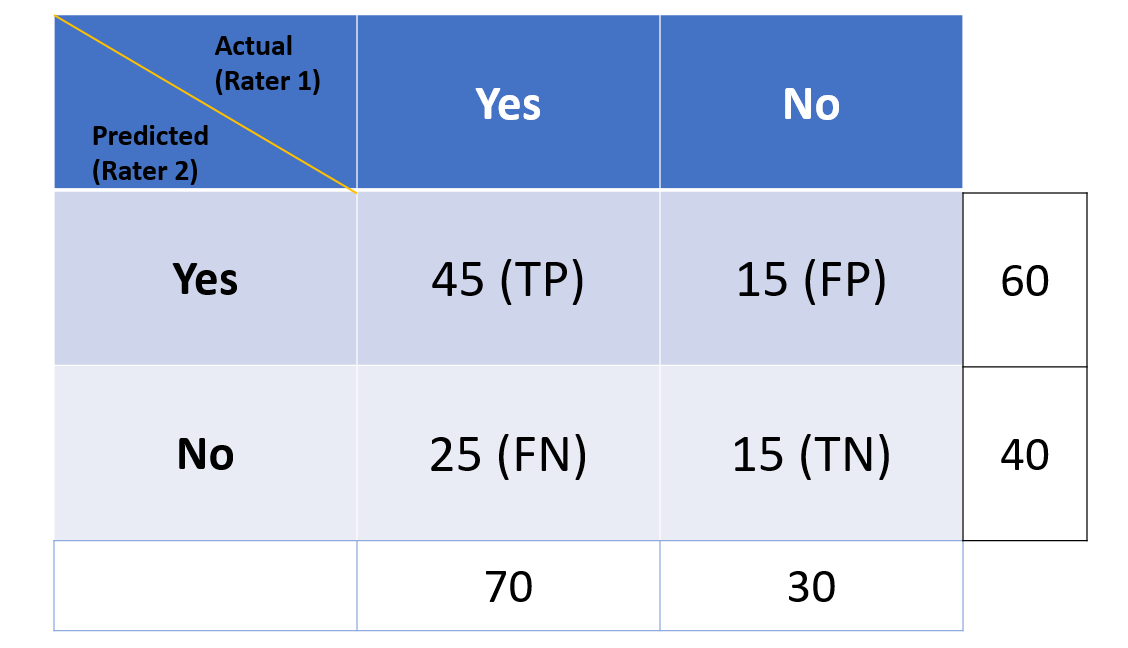

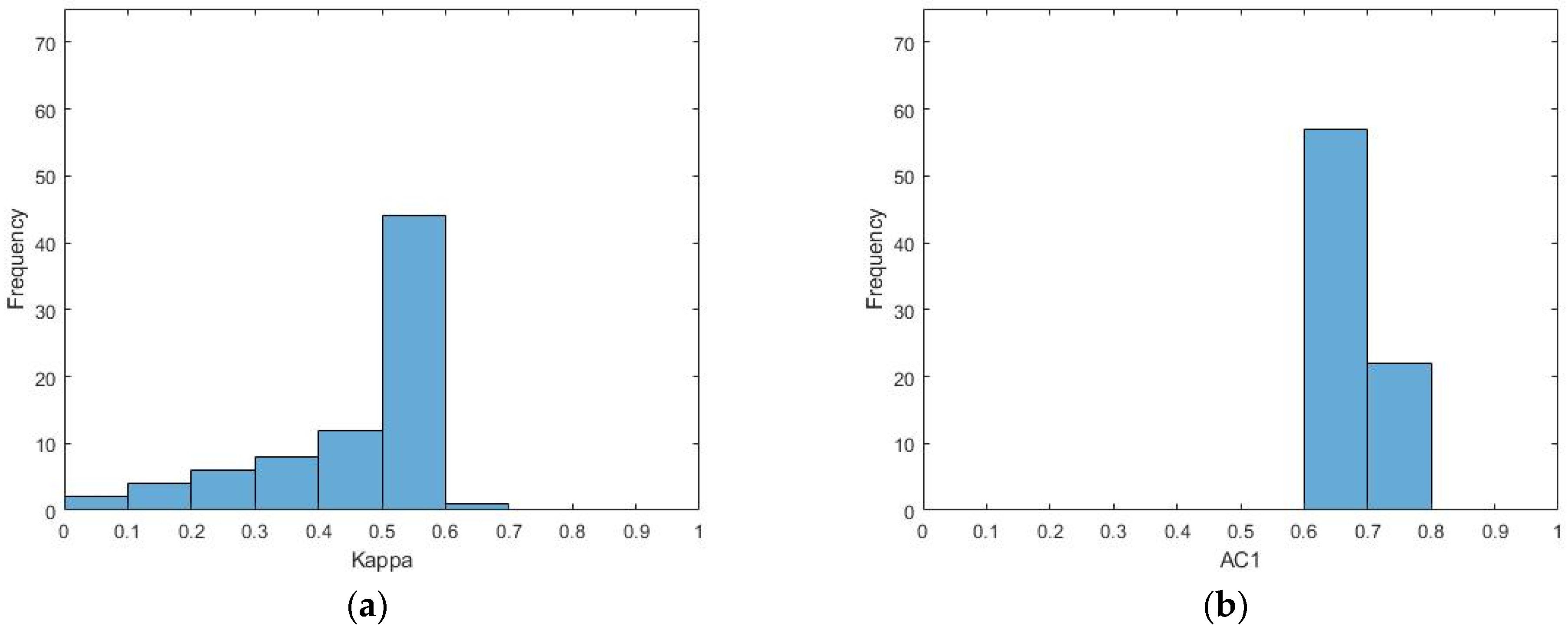

Symmetry | Free Full-Text | An Empirical Comparative Assessment of Inter-Rater Agreement of Binary Outcomes and Multiple Raters

Inter-rater agreement Kappas. a.k.a. inter-rater reliability or… | by Amir Ziai | Towards Data Science

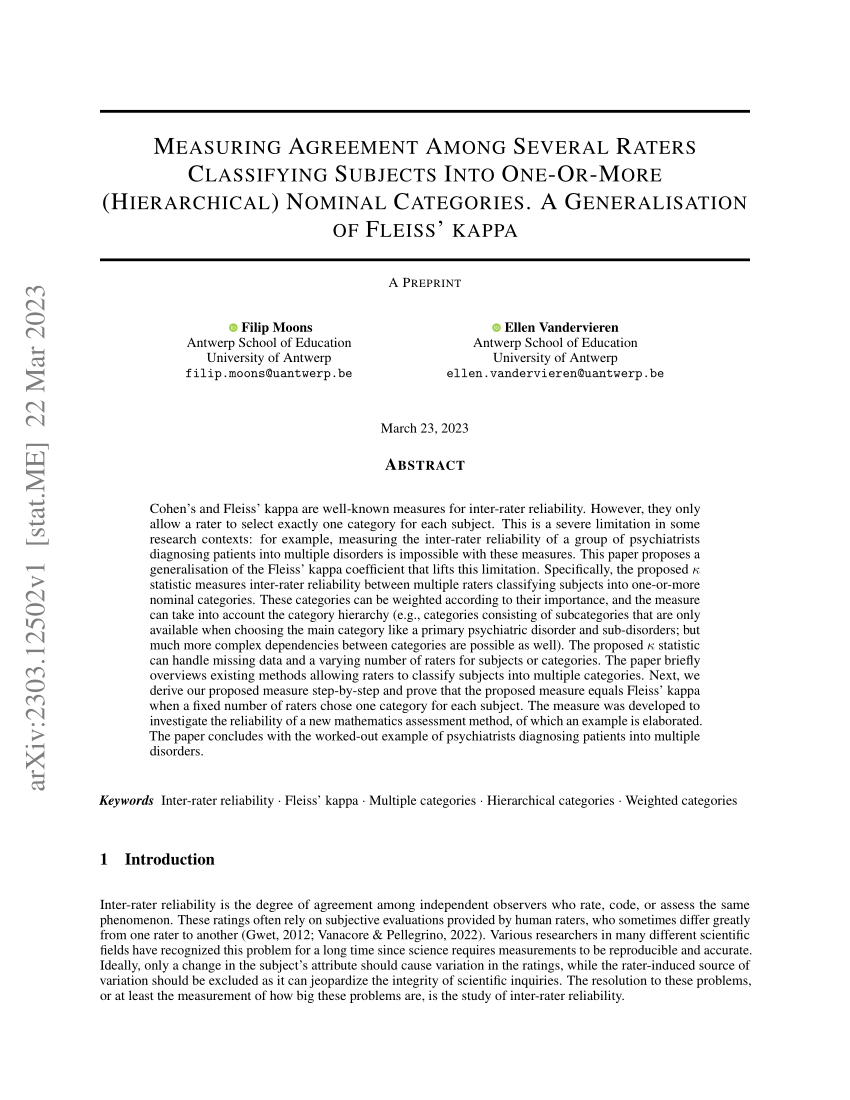

(PDF) Measuring agreement among several raters classifying subjects into one-or-more (hierarchical) nominal categories. A generalisation of Fleiss' kappa

![StaTips Part III: Assessment of the repeatability and rater agreement for nominal and ordinal data | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/bd16d5faf36c6c90b4a5936b806f1aca3bd78877/2-Table1-1.png)

[PDF] StaTips Part III: Assessment of the repeatability and rater agreement for nominal and ordinal data | Semantic Scholar